In this part of the tutorial, we are going to set up the server-side logic. We will cover all the steps, including the AWS services setup, the backend, and the frontend. So, without wasting a minute, let's get started.

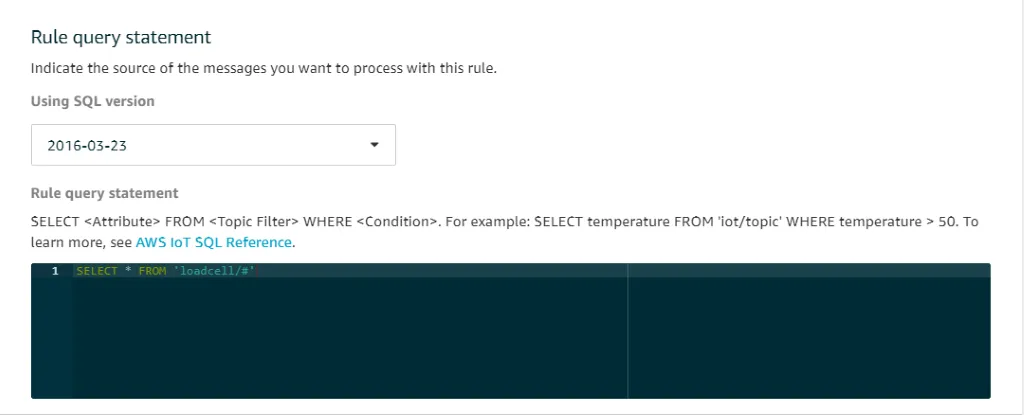

Referring to part 1 of this tutorial, we are able to get data

on AWS IoT Core. It’s time to do something with the data.

Since the main aim of this tutorial is to show real time

graph, we are not going to save the data. For that, we need

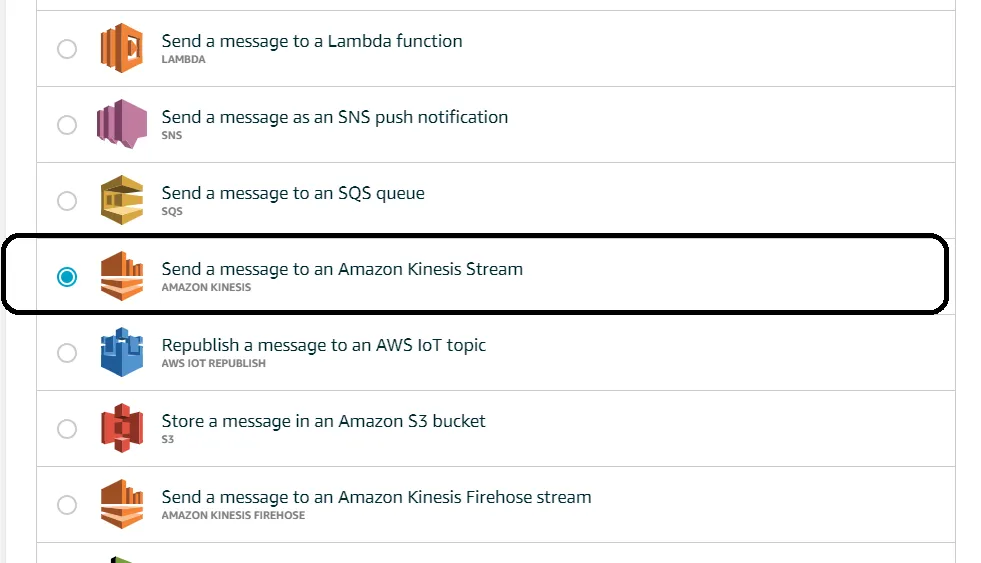

something to process the data real time. So here comes Amazon

Kinesis into play.

Amazon Kinesis Data Streams (KDS) is a massively scalable and durable real-time data streaming service. KDS can continuously capture gigabytes of data per second from hundreds of thousands of sources such as website clickstreams, database event streams, financial transactions, social media feeds, IT logs, and location-tracking events. The data collected is available in milliseconds to enable real-time analytics use cases such as real-time dashboards, real-time anomaly detection, dynamic pricing, and more.

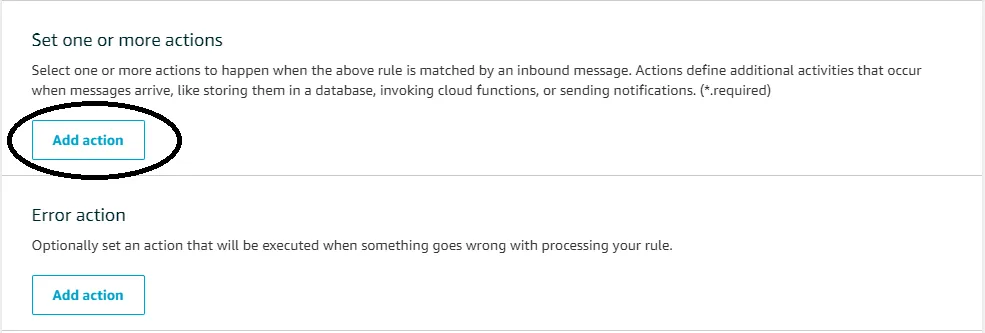

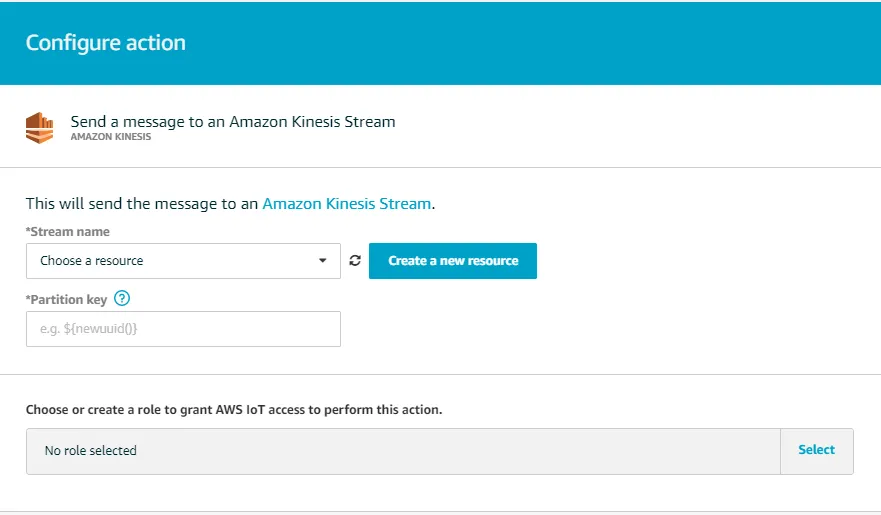

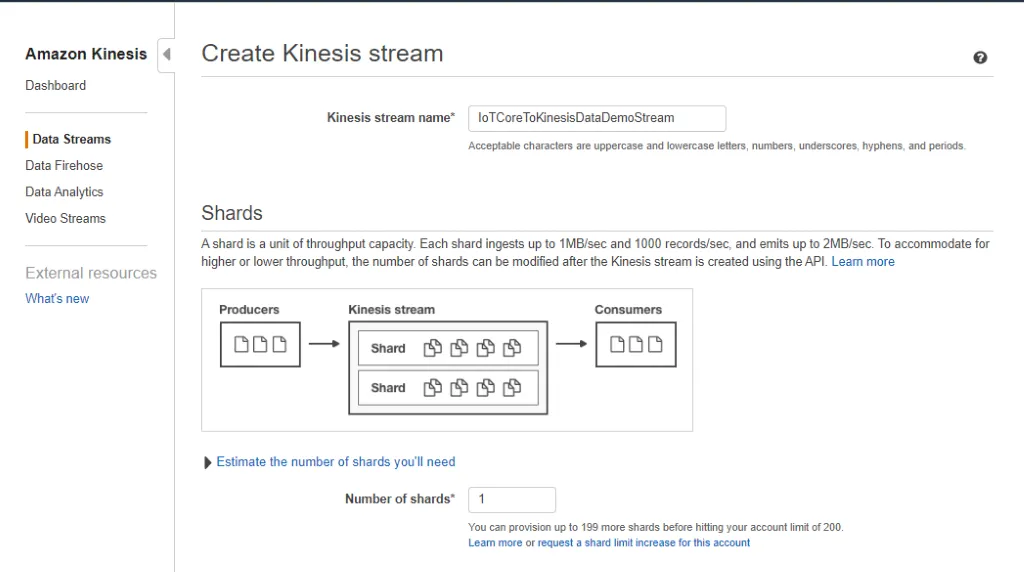

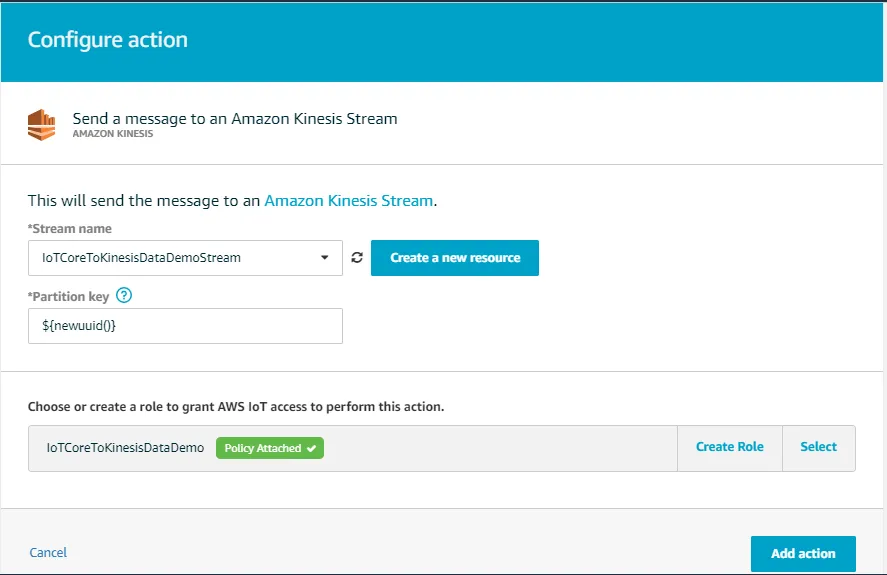

We are going to forward our data to Kinesis Data Streams. IoT Core will act as the producer for the Kinesis data stream.

Follow the steps below to create and configure the Kinesis data stream.

Now it's time to code the Kinesis consumer that reads data from the Kinesis stream. We are using the KCL (Kinesis Client Library) provided by AWS.
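As a rough illustration of what the consumer does with each record: the KCL hands a record's payload to your record processor as a `ByteBuffer`, so the first step is usually to decode it into text. The sketch below uses only the JDK. The class name `RecordDecoder` and the sample JSON payload are assumptions made for illustration; they are not part of the KCL or of this project's code.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper (not a KCL class): turns raw record payloads into strings.
public class RecordDecoder {

    // Decodes one payload as UTF-8 text.
    // duplicate() shares the bytes but keeps its own position, so the
    // caller's buffer is left untouched and can be read again.
    static String decode(ByteBuffer data) {
        return StandardCharsets.UTF_8.decode(data.duplicate()).toString();
    }

    // Decodes a batch of payloads, preserving their order.
    static List<String> decodeAll(List<ByteBuffer> payloads) {
        List<String> messages = new ArrayList<>();
        for (ByteBuffer payload : payloads) {
            messages.add(decode(payload));
        }
        return messages;
    }

    public static void main(String[] args) {
        // Assumed sample payload; the real shape depends on what the device publishes.
        ByteBuffer sample = ByteBuffer.wrap(
                "{\"temperature\": 24.5}".getBytes(StandardCharsets.UTF_8));
        System.out.println(decode(sample));
    }
}
```

In a real record processor, you would call something like `decode` on the payload of each record in the batch the KCL delivers, then pass the resulting string on to whatever pushes data to the frontend.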

The backend has two more parts.

public static final String SAMPLE_APPLICATION_STREAM_NAME = "{YOUR_STREAM_NAME}";

private static final String SAMPLE_APPLICATION_NAME = "{APPLICATION NAME}";

In this tutorial, we have seen how to set up a backend that processes data immediately so it can be shown on real-time graphs, and a frontend that updates the data in real time using WebSockets.
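To make the backend-to-frontend hand-off a little more concrete, here is a minimal sketch of the fan-out idea: every decoded reading is pushed to all currently connected clients. It uses only the JDK, and each client is modeled as a `Consumer<String>` callback. That callback, along with the `ReadingBroadcaster` class and its method names, is an assumption standing in for a real WebSocket session; it is not the code from this project.

```java
import java.util.List;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.function.Consumer;

// Hypothetical stand-in for the WebSocket layer: fans one message out to all clients.
public class ReadingBroadcaster {

    // Each connected client is a callback that sends one text message.
    // CopyOnWriteArrayList lets us remove clients safely while iterating.
    private final List<Consumer<String>> clients = new CopyOnWriteArrayList<>();

    public void connect(Consumer<String> client) {
        clients.add(client);
    }

    public void disconnect(Consumer<String> client) {
        clients.remove(client);
    }

    // Sends the message to every client and returns how many sends succeeded.
    public int broadcast(String message) {
        int delivered = 0;
        for (Consumer<String> client : clients) {
            try {
                client.accept(message);
                delivered++;
            } catch (RuntimeException e) {
                // A failed send is treated as a closed connection: drop the client.
                clients.remove(client);
            }
        }
        return delivered;
    }

    public static void main(String[] args) {
        ReadingBroadcaster broadcaster = new ReadingBroadcaster();
        broadcaster.connect(msg -> System.out.println("client A got " + msg));
        broadcaster.connect(msg -> System.out.println("client B got " + msg));
        // Assumed sample payload for illustration only.
        broadcaster.broadcast("{\"temperature\": 24.5}");
    }
}
```

With a real WebSocket library, `connect` and `disconnect` would typically be called from the library's open and close handlers, and the callback would wrap the session's send method.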

I hope this helps you solve your problem. If you run into any issue while executing the above code, you can raise an issue on GitLab. Our team will try to resolve it as soon as possible.